Data-Driven Music and Installations

My research and creative practice explore how the sonic representation of data can function as both aesthetic experience and critical inquiry. This work sits at the intersection of composition, performance, data sonification, and spatial audio, using computational methods to transform datasets into musical structures that still communicate the underlying data.

My work treats data as a material that informs or generates compositional and sonic decisions. I create data-driven compositions, installations, and performances that translate embodied, environmental, biological, social, and other kinds of data into sound. The work draws upon data sonification, the process of converting data into sound to help people understand it, using auditory parameters such as pitch, volume, pattern, and timbre to represent data. I use these methods to move between developing auditory displays, aesthetic data sonification, and affective representation of data.

Questions: What does it mean to sonify and collaborate with data as a listening practice? What data gets counted? How can data-driven music be used to communicate complex social and ecological concerns?

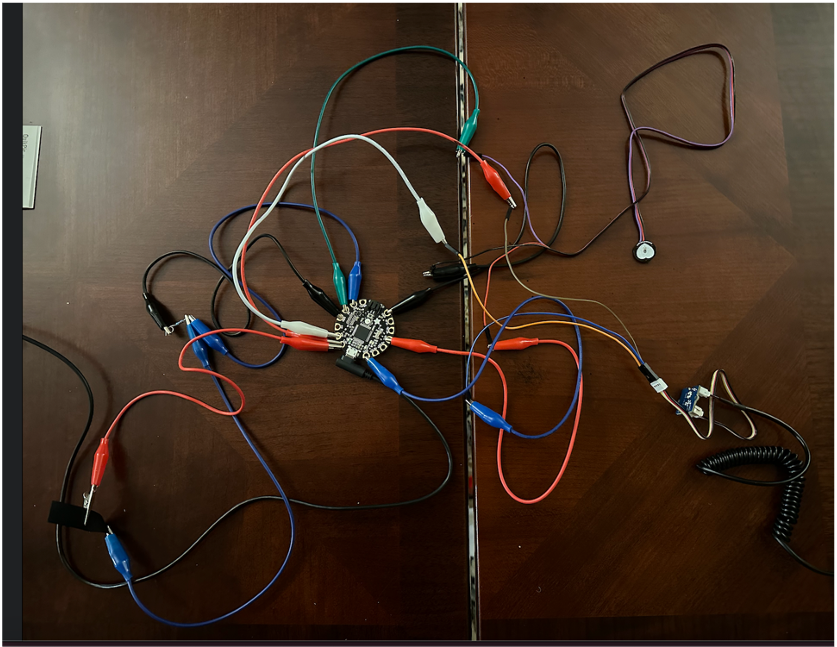

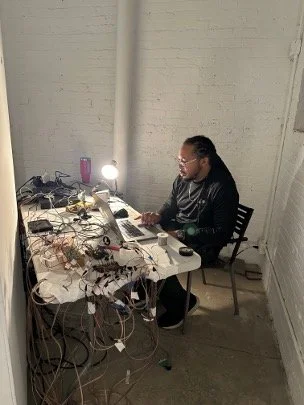

To make data-driven music, I build electronic instruments, wearable electronics, interactive systems, and sensors that translate data into sound. My compositional and performance approaches are listed below. To show my body of work,

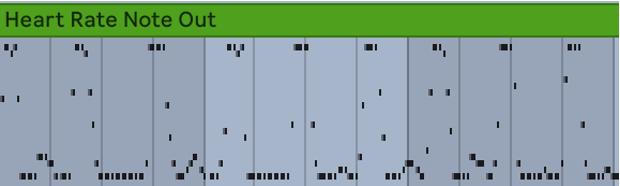

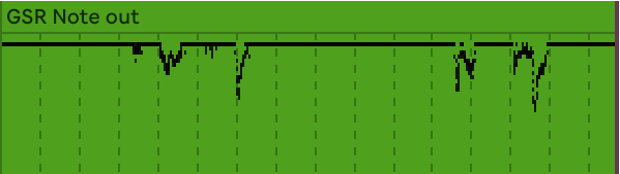

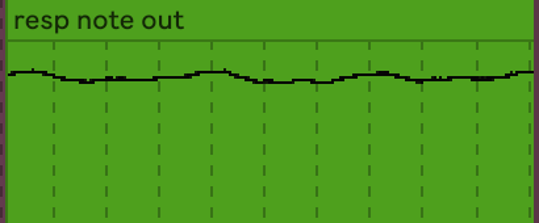

I have been cultivating a bioartistic practice that enables me to listen to my biological signals and interact with a fuller array of embodied states in a way that is inviting, fosters collaboration, and puts my work in dialogue with works of the past. Through a theoretical framework, historical inquiry, and practice-led research, I have defined three modes of listening to biological signals: Situated Listening, Embracing Irregularity, and Listening Beyond the Ear. These modes continue to inform my work, which is characterized by the design, construction, theoretical exploration, and performance of wearable electronics that capture and translate biological data into immersive sonic and visual environments. They have also informed all of my data-driven work and how I approach data-driven music.

bioMusic

Publications

Smith, Alexandria. Voices in the Skin. John Zorn (Ed.), Arcana X: Musicians on Music. 2022. New York, NY: Hips Road/Tzadik.

Smith, A. 2025. Modes of Listening: Practice-Led Perspectives on Performing Biofeedback Music. Proceedings of the 50th International Computer Music Conference. (displayed here)

“Pauline Oliveros and Biofeedback.” American Musicological Society. Chicago, IL. November 2024.

Smith, A. R. (2023). Modes of Listening: Practice-led Perspectives on Music and Biofeedback. UC San Diego. ProQuest ID: Smith_ucsd_0033D_22778. Merritt ID: ark:/13030/m56j5dn4. Retrieved from https://escholarship.org/uc/item/2sj28114

Interfaces

Voices in the Skin 3

Sonifying Data Centers

Stay tuned! We are working on more projects.

Data centers provide the critical infrastructure needed to house the servers, data storage drives, and network equipment that power the internet, process and distribute data, and train, deploy, and deliver AI applications and services. While they provide these services to people throughout the world, they carry drastic and concentrated social and environmental consequences. These include raising local power bills as they stress the electrical grid, cutting the water supply to local communities, occupying large amounts of land while providing few jobs or resources (such as food or other goods) to communities, polluting the air, and emitting a constant, audible low hum that disturbs local wildlife and people living close to them.

“all because we can” is a reflection on the rapid investment in and construction of data centers since the AI boom that began in the late 2010s. The piece features a steady counterpoint of data sonification that superimposes the number of data center facilities that have been active, under construction, or announced from 2000, with projections to 2048, alongside their total power capacity over the same years.

The city I live in, , and more broadly, are considered hot spots for AI data centers because companies can acquire land, water, and electricity at low cost. The people who profit from these data centers or use them to generate text, music, etc., are often far removed from the social and environmental costs that these technologies exact. I often wonder whether the convenience of LLMs and other generative AI uses that require large models is worth the environmental and ecological impact.

“all because we can” for trumpet, interactive visuals, and data sonification (2025-2026)

Alexandria Smith (data-driven music) and Kyle Smith (visuals)

Implemented in third-order ambisonics, the work uses parameter-mapping sonification strategies and spatial audio techniques to communicate the environmental and societal consequences of the unregulated, rapid expansion of data centers. The composition was authored, and the data was mapped, in Max/MSP. The sound engine was designed in Ableton Live 12 using custom software instruments, and the IEM Plug-in Suite was used for spatialization. For the live performance on trumpet (with silent brass mute), I use the ICST external for Max to spatialize my sound live. The composition can be performed ambisonically, in 8.1, or in 4.1.
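As a rough illustration of what parameter-mapping sonification means here, the sketch below maps one data series to pitch and another to amplitude. It is written in Python for readability and is not the Max/MSP patch used in the piece. The function names, the pitch and amplitude ranges, and the numbers in `facilities` and `power_mw` are all placeholder assumptions, not the dataset or mappings behind “all because we can”.

```python
def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamped."""
    if in_hi == in_lo:
        return out_lo
    t = (value - in_lo) / (in_hi - in_lo)
    t = max(0.0, min(1.0, t))
    return out_lo + t * (out_hi - out_lo)


def midi_to_hz(note):
    """Convert a MIDI note number to frequency (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def map_series(facilities, power_mw, pitch_range=(36, 84), amp_range=(0.1, 1.0)):
    """Map facility counts to pitch and power capacity to amplitude.

    Returns one (midi_note, frequency_hz, amplitude) tuple per time step.
    The ranges are illustrative defaults, not values from the piece.
    """
    f_lo, f_hi = min(facilities), max(facilities)
    p_lo, p_hi = min(power_mw), max(power_mw)
    events = []
    for count, power in zip(facilities, power_mw):
        note = round(scale(count, f_lo, f_hi, *pitch_range))
        amp = scale(power, p_lo, p_hi, *amp_range)
        events.append((note, midi_to_hz(note), amp))
    return events


# Placeholder values only: NOT the data used in the composition.
facilities = [10, 20, 40, 80]
power_mw = [100.0, 150.0, 300.0, 500.0]
events = map_series(facilities, power_mw)
```

Each tuple in `events` could drive one note of a synthesizer voice. A linear mapping is only one option; a logarithmic or stepped scale would change how growth in the data is heard.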

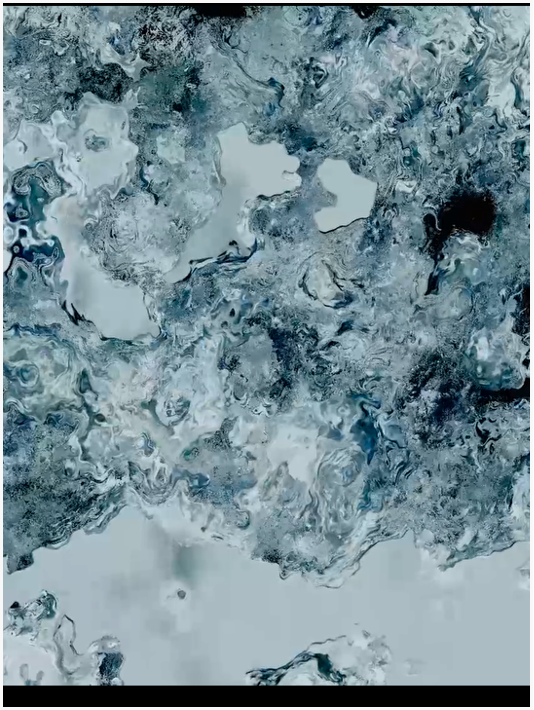

The visual layer is staged as a megalithic facade that fills the frame and functions as a brutalist abstraction of resource availability. What reads as solid and immutable at first is gradually depleted as rising demand steadily consumes the sense of available resources. The visuals serve as a guide alongside the improvising performer to communicate the effects and material consequences of resource depletion that this rapid expansion will cause if not mitigated.

The visuals were implemented as a real-time OpenGL Shading Language (GLSL) fragment shader in TouchDesigner. All data was received locally via Open Sound Control (OSC) from the data sonification Max patch. The amount of planned and ongoing construction introduces directional tearing via anisotropic warping. Facility count increases the density of the erosion detail, and power demand biases the cutoff as an additional stress term.

if we stay still (2025-2026)

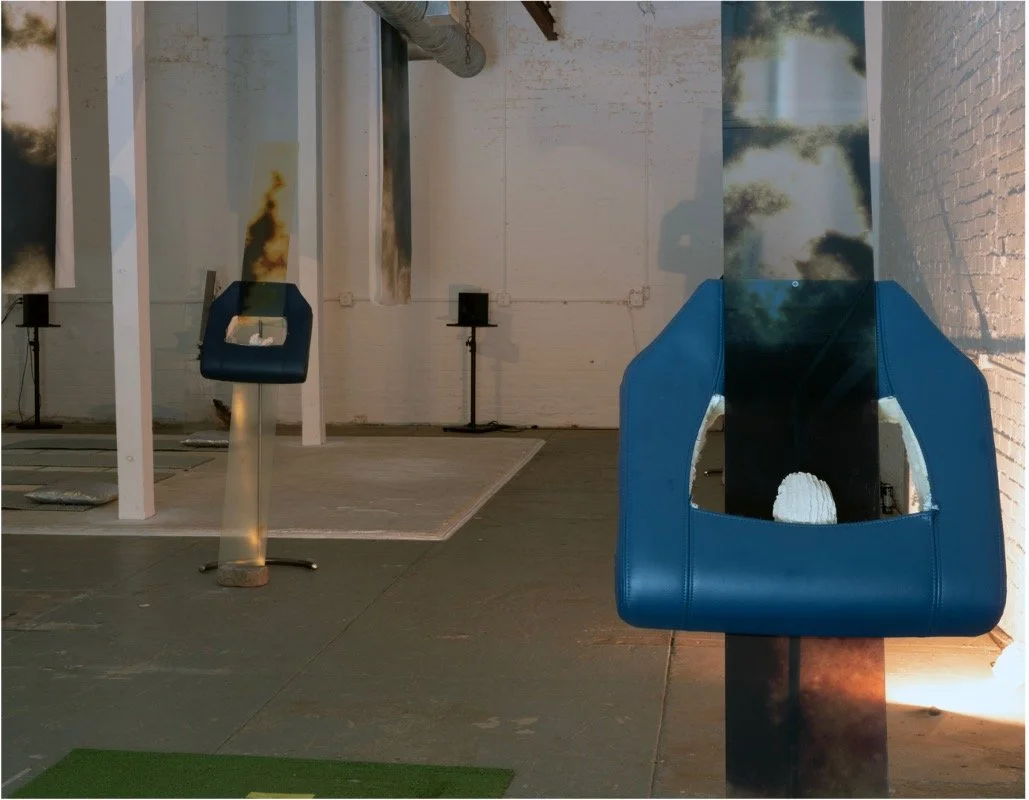

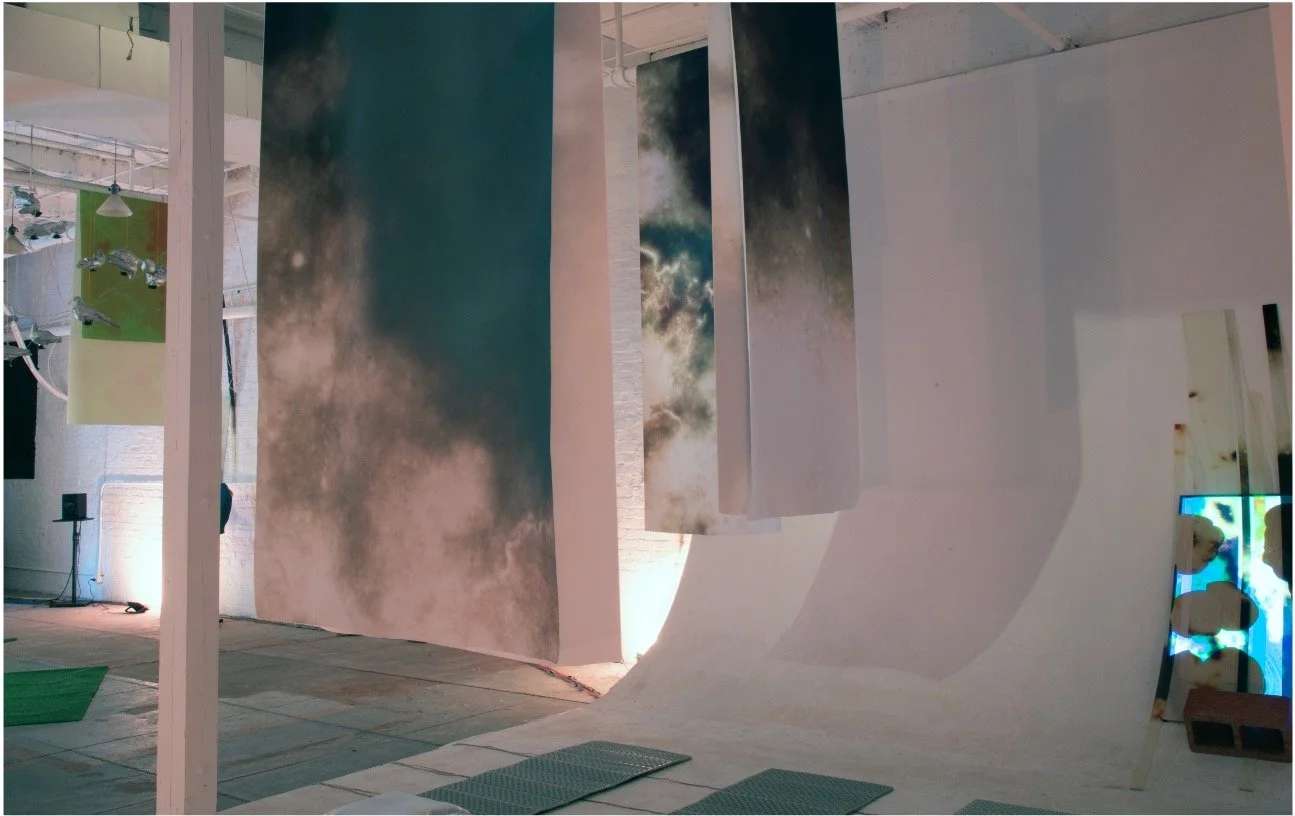

Exploring how our patterns of movement impact the world we live in, if we stay still uses sound, video, sculpture, and live musical performance to create an immersive, interactive environment in which stillness and action feel both unnervingly impossible and urgently necessary. This installation reflects on the potential of slowing our movement across the planet while speculating on what the planet might look and sound like in a geo-engineered future.

A collaboration between artist / researcher / filmmaker Jeremy Bolen, music technologist / performer-composer / researcher Alexandria Smith, and composer / sonic artist Katherine Young, this installation speculates on a not-too-distant future in which the sky has been engineered to block out the sun and once-extinct species (the passenger pigeon) have been brought back through de-extinction methods.

The evening soundscape recording was made at the Hard Labor Creek Observatory in Georgia, on the homelands of the peoples of the Muscogee (Creek) and Cherokee nations.

Visitors are invited to explore and interact with the space to see how their movements and positions change the sonic environment. The work has been presented at the Goat Farm’s SITE (2025) and the Science Gallery (Atlanta).

ATL ART Week Goat Farm Exhibition (Sept 2025) - 3,500 visitors

ATL Science Gallery (Sept 2024-April 2025) - 9,100 visitors

Installation Assistance:

TeAiris Majors (Audio System Development Assistant) - Goat Farm

Devon Green - Goat Farm

Evan Maloney - Goat Farm

Renny Hyde - ATL Science Gallery

Mir Jeffres - ATL Science Gallery

Performers:

Chris Childs - percussion

Deisha Oliver - cello

Monique Osario - voice

Sensor Design and Fabrication

I designed the embedded Velostat pressure sensors that measured the pressure of visitors interacting with the turf (under the pigeon speakers; more below) and the camping mats. Readings were taken by an Arduino, and the sound engine was designed in Max/MSP. The sensors triggered Katie Young’s composition through the pigeon speakers and interacted with the field recording in the main speaker array. TeAiris Majors assisted with the Max patch design and implementation.
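One common way to turn a noisy pressure reading into a clean trigger is a threshold with hysteresis, sketched below in Python. This is an illustration of the general technique, not the Arduino firmware or Max patch used in the installation. It assumes a 10-bit analog reading (0 to 1023) that rises as pressure increases, which depends on how the sensor is wired; the class name, threshold values, and sample readings are hypothetical.

```python
class PressureTrigger:
    """Fire once when a press begins, then wait for release before re-arming.

    Two thresholds (hysteresis) keep a reading that hovers near a single
    cutoff from retriggering repeatedly.
    """

    def __init__(self, on_threshold=600, off_threshold=450):
        if off_threshold >= on_threshold:
            raise ValueError("off_threshold must be below on_threshold")
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.pressed = False

    def update(self, reading):
        """Return True only on the reading where a new press is detected."""
        if not self.pressed and reading >= self.on_threshold:
            self.pressed = True
            return True
        if self.pressed and reading <= self.off_threshold:
            self.pressed = False
        return False


# Made-up readings: a step onto the sensor, a release, then a second step.
readings = [120, 300, 640, 700, 580, 500, 430, 200, 650]
trigger = PressureTrigger()
fired = [i for i, r in enumerate(readings) if trigger.update(r)]
```

Here `fired` holds the indices of the readings at which a trigger would be sent on to the sound engine. The gap between the two thresholds would need tuning per sensor, since a sensor under turf and one under a camping mat are likely to respond differently.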

Pigeon Speakers

We designed a circuit to power 12 pigeon speakers and collaborated with Jeremy Bolen on mounting them into his fabricated pigeons. The pigeon speakers sonified Katie Young’s composition and its triggers, along with the interaction between visitors and the sensors embedded in the turf. Devon Green assisted with the speaker circuit.